BigQuery Prerequisites

Prerequisites

- By default, BigQuery authentication uses role-based access. You will need the data-syncing service's service account name available to grant access:

[email protected].

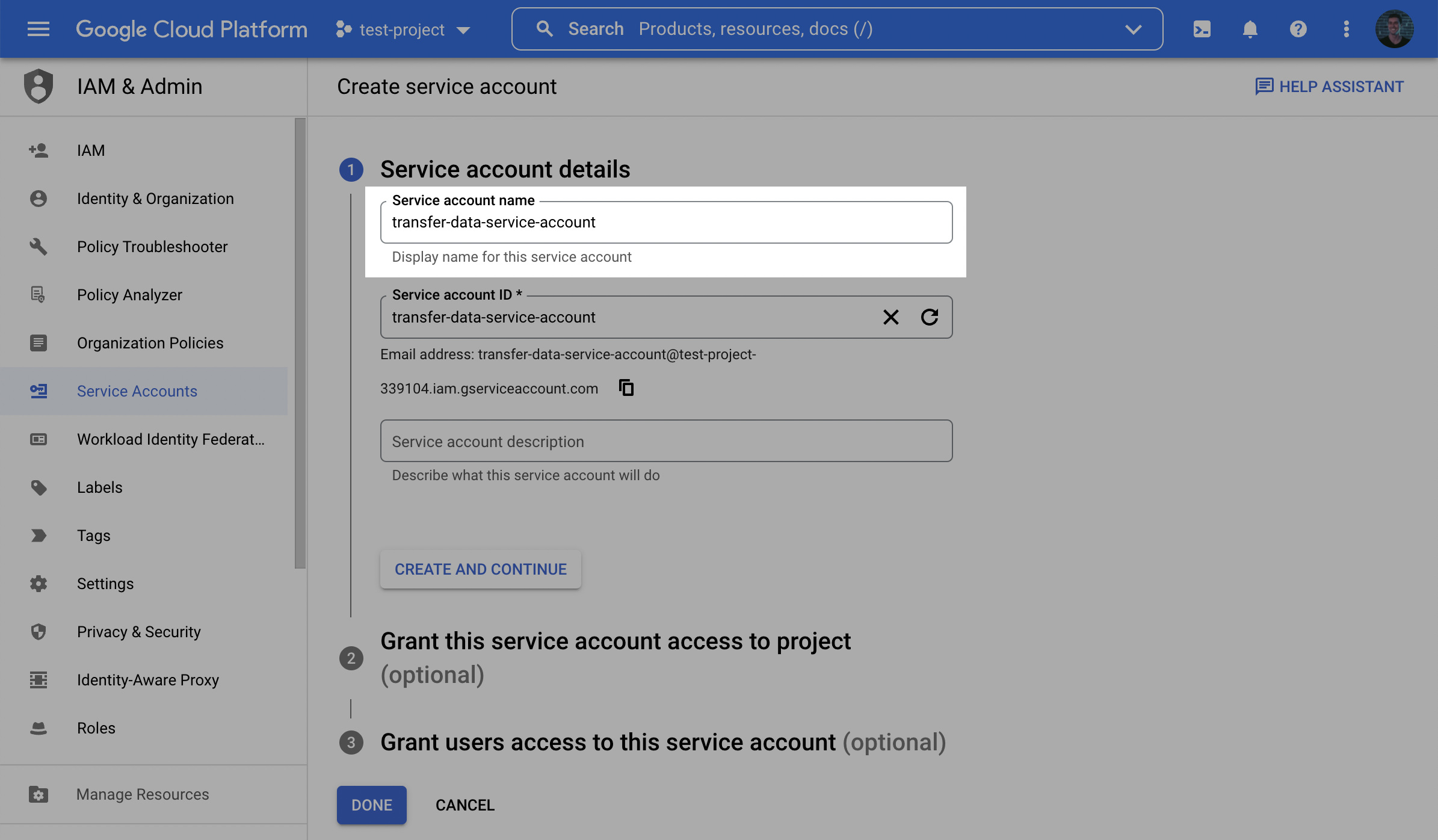

Step 1: Create a service account in your BigQuery project

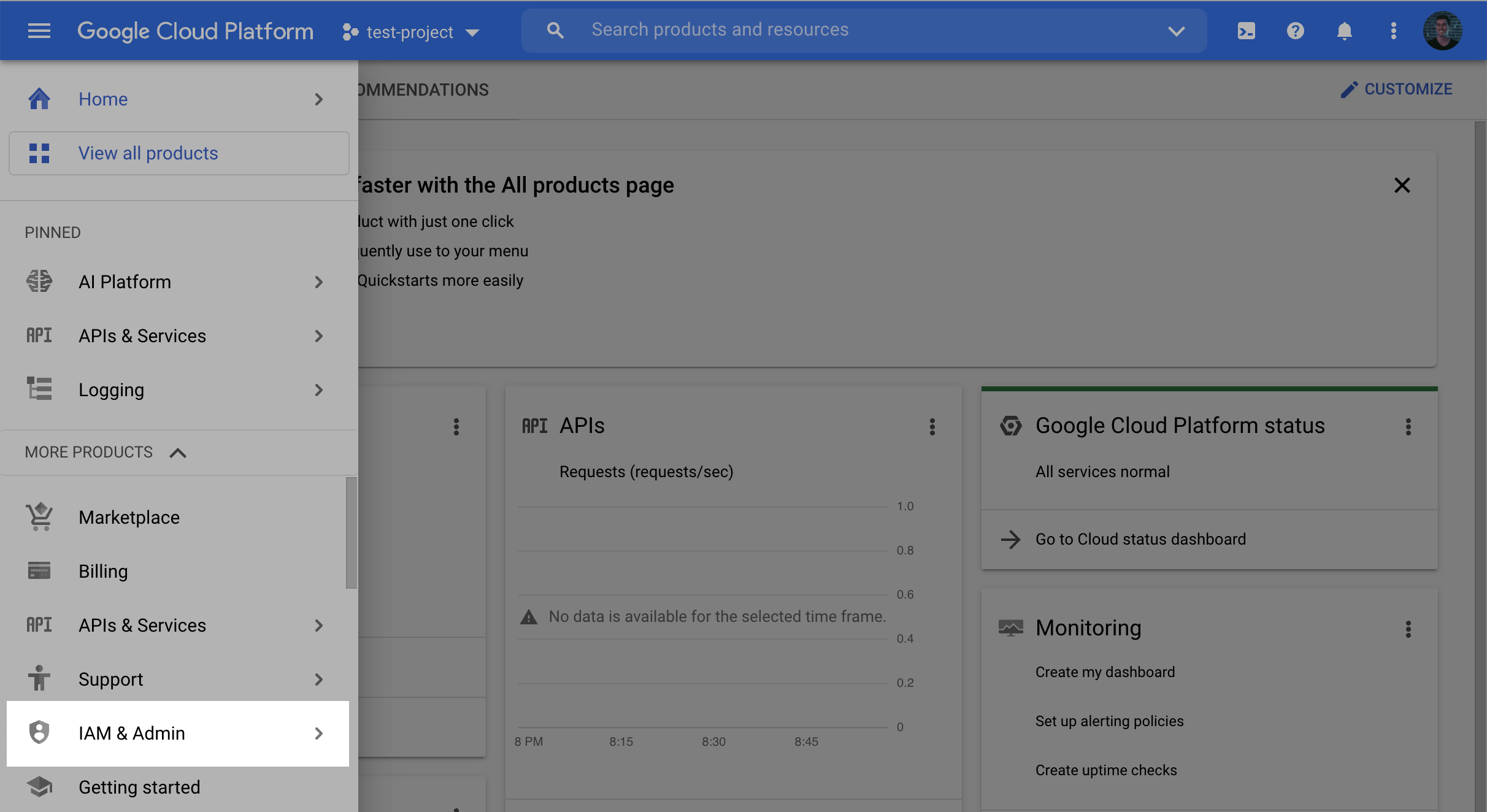

- In the GCP console, navigate to the IAM & Admin menu.

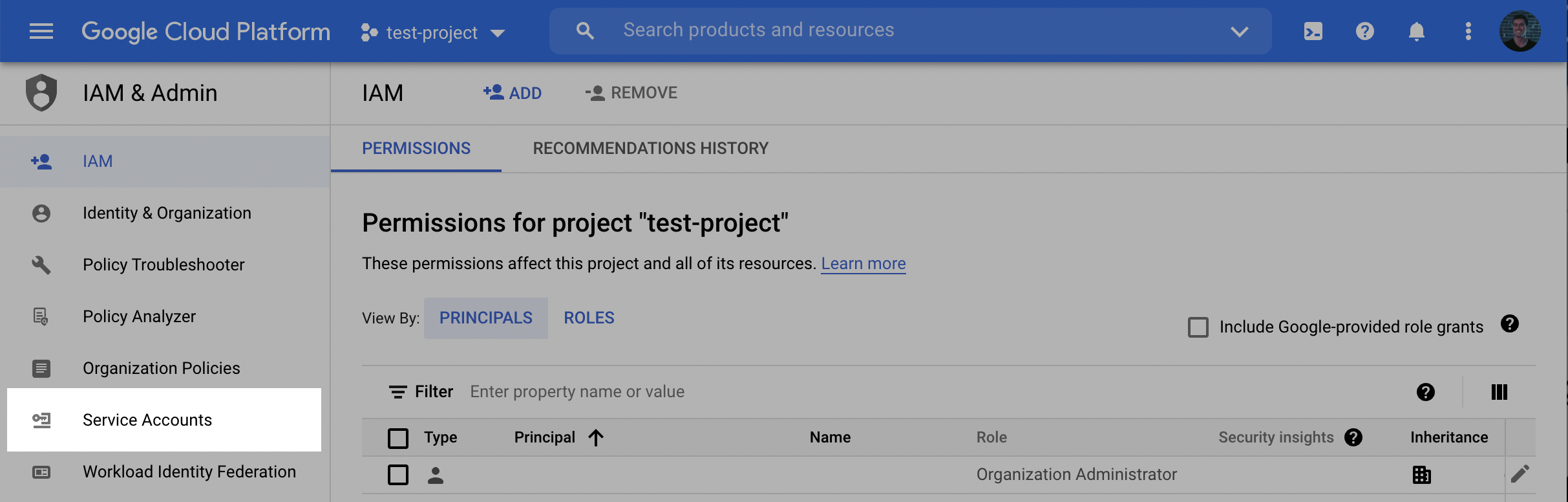

- Open the Service Accounts tab.

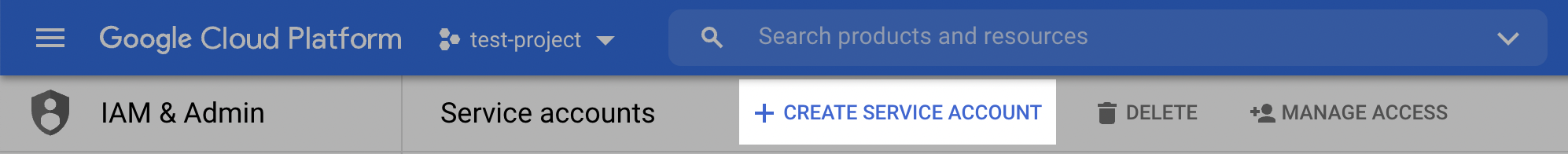

- Click Create service account at the top of the menu.

- In the first step, name the service account and click Create and Continue.

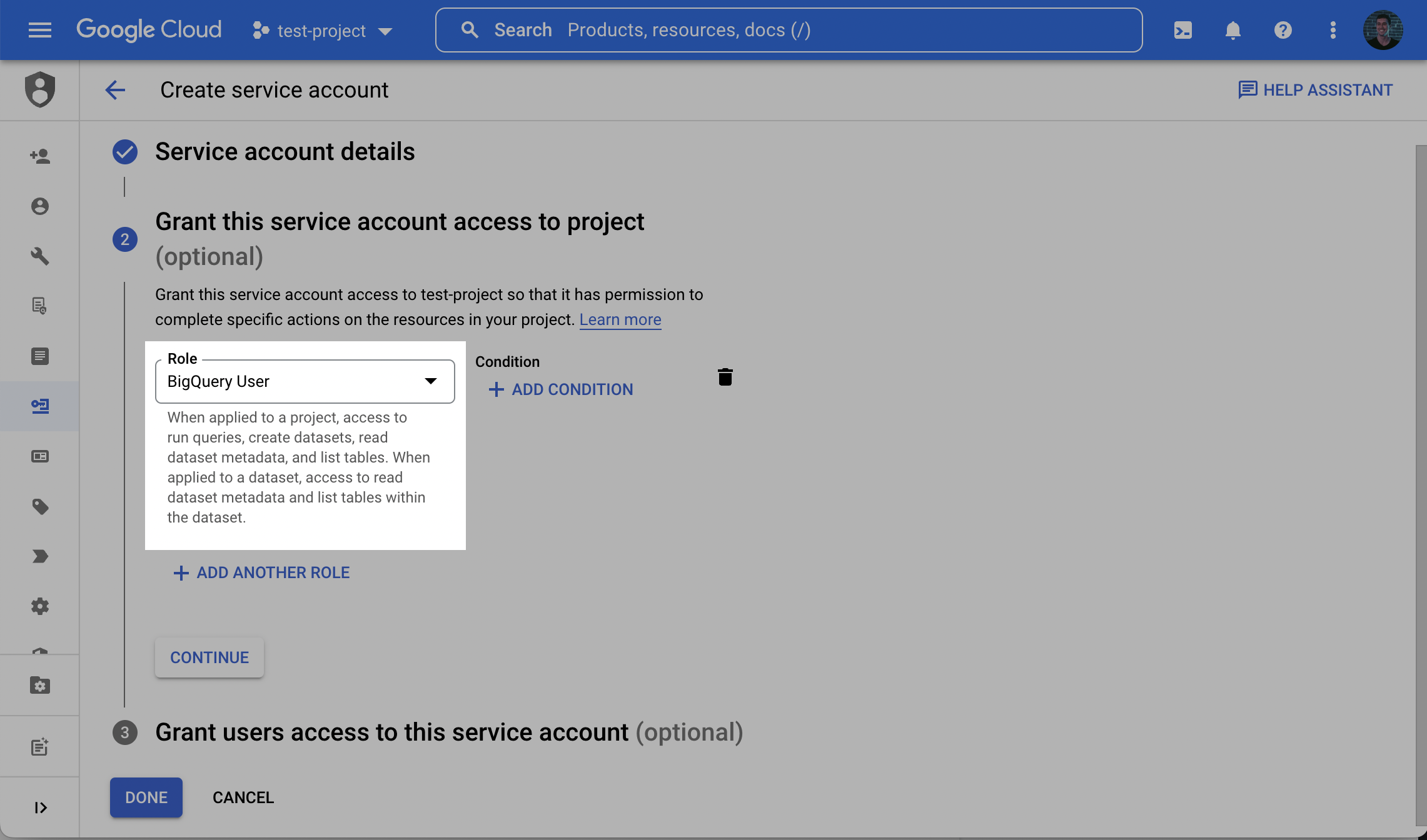

- In the second step, grant the service account the BigQuery User role.

Loading data into a dataset that already exists

By default, we will attempt to create a new dataset (with a name you provide) in the BigQuery project. If you instead create the dataset ahead of time, you must grant this service account the BigQuery Data Owner role at the dataset level.

- In BigQuery, click the existing dataset. In the dataset tab, click Sharing, then Permissions. Click Add Principal, enter the service account name, and add the BigQuery Data Owner role.
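If you prefer SQL to the Console, BigQuery's `GRANT` statement can assign the same dataset-level role. The sketch below only builds the statement as a string; `dataset_owner_grant_sql` is an illustrative helper rather than part of any library, and the project ID, dataset name, and service account email in the example are hypothetical placeholders.

```python
def dataset_owner_grant_sql(project_id: str, dataset: str, sa_email: str) -> str:
    """Build a BigQuery DCL statement that grants Data Owner on one dataset."""
    # ON SCHEMA targets a dataset; the backticks quote the project.dataset path.
    # IAM identifies service accounts with the "serviceAccount:" prefix.
    return (
        "GRANT `roles/bigquery.dataOwner` "
        f"ON SCHEMA `{project_id}.{dataset}` "
        f'TO "serviceAccount:{sa_email}";'
    )


if __name__ == "__main__":
    # Hypothetical values; substitute your own project, dataset, and email.
    print(dataset_owner_grant_sql(
        "my-project",
        "logrocket_export",
        "export-loader@my-project.iam.gserviceaccount.com",
    ))
```

Run the printed statement in the BigQuery query editor as a user who is allowed to change the dataset's permissions.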

- In the third step (Grant users access to this service account), enter the service account provided in the prerequisites in the Service account users role field, and click Done.

- Once the service account is created, search for it in the service accounts list, click its name to view the details, and make a note of its email address. (This is a different address from the data-syncing service's service account.)

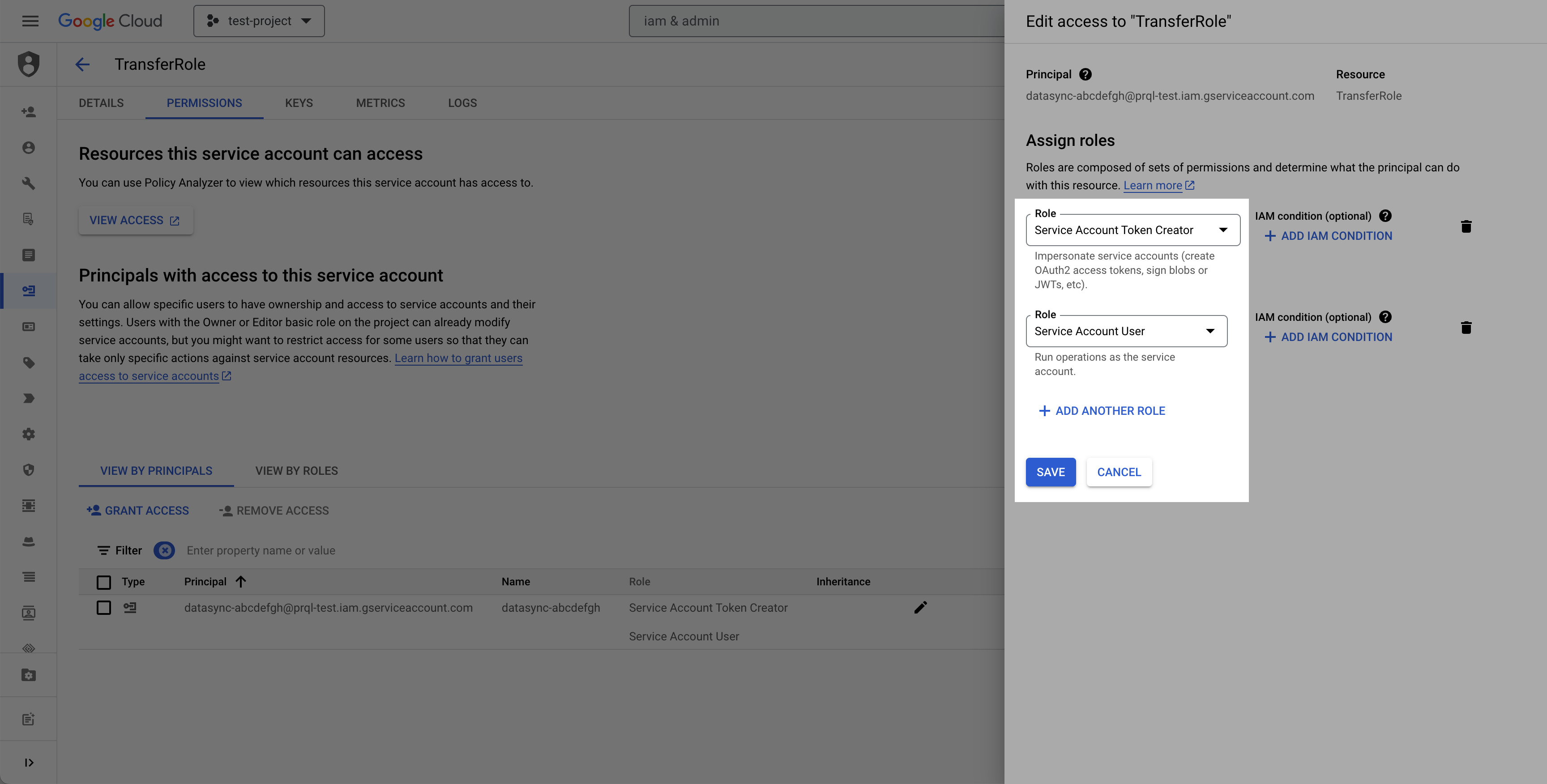

- Select the Permissions tab and find the principal provided in the prerequisites. Click the Edit principal button (pencil icon), click Add another role, select the Service Account Token Creator role, and click Save.

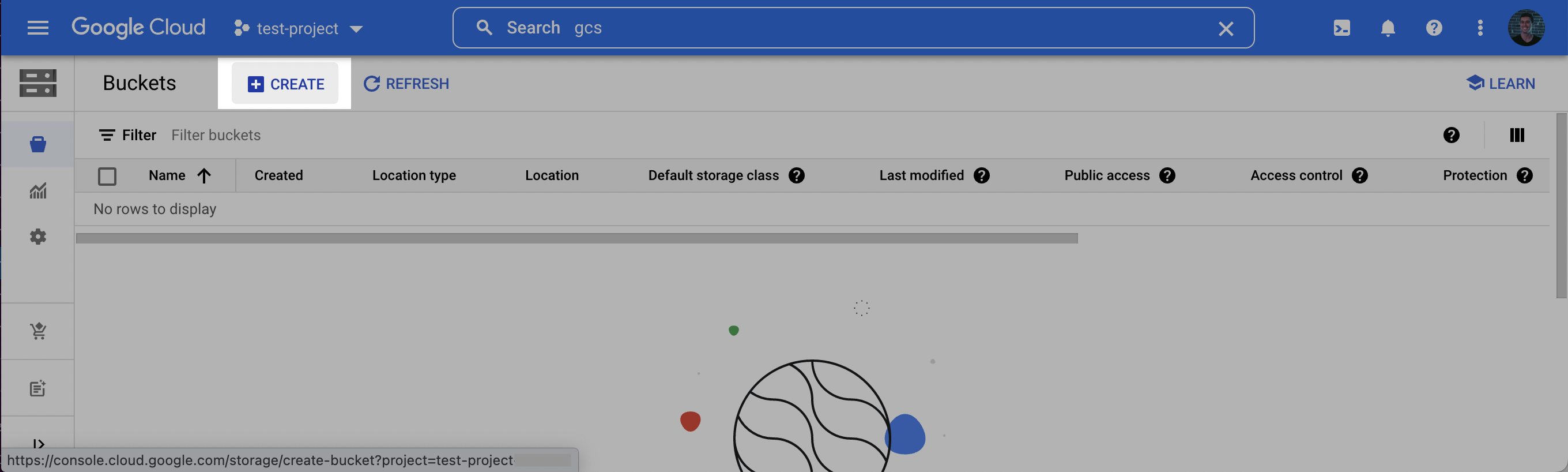
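The Console steps in Step 1 roughly correspond to the `gcloud` commands assembled by the sketch below, which prints them rather than running them. It assumes the Service account users field maps to the `roles/iam.serviceAccountUser` role. The service account name, project ID, and provider address are hypothetical placeholders; substitute the address from the prerequisites.

```python
import shlex
from typing import List


def service_account_email(name: str, project_id: str) -> str:
    # User-managed service accounts are addressed as NAME@PROJECT_ID.iam.gserviceaccount.com.
    return f"{name}@{project_id}.iam.gserviceaccount.com"


def step1_commands(name: str, project_id: str, provider_sa: str) -> List[List[str]]:
    """Return gcloud argument lists mirroring the Console steps of Step 1."""
    sa = service_account_email(name, project_id)
    provider = f"--member=serviceAccount:{provider_sa}"
    return [
        # Create the service account.
        ["gcloud", "iam", "service-accounts", "create", name, f"--project={project_id}"],
        # Grant it BigQuery User on the project.
        ["gcloud", "projects", "add-iam-policy-binding", project_id,
         f"--member=serviceAccount:{sa}", "--role=roles/bigquery.user"],
        # Let the provider's account act as this service account (Service Account User).
        ["gcloud", "iam", "service-accounts", "add-iam-policy-binding", sa,
         f"--project={project_id}", provider, "--role=roles/iam.serviceAccountUser"],
        # Let the provider's account create tokens for it (Service Account Token Creator).
        ["gcloud", "iam", "service-accounts", "add-iam-policy-binding", sa,
         f"--project={project_id}", provider, "--role=roles/iam.serviceAccountTokenCreator"],
    ]


if __name__ == "__main__":
    # Hypothetical placeholder values.
    for cmd in step1_commands("export-loader", "my-project",
                              "provider@example.iam.gserviceaccount.com"):
        print(shlex.join(cmd))
```

Printing the commands first lets you review each role binding before running it against a real project.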

Step 2: Create a staging bucket

- Log into the Google Cloud Console and navigate to Cloud Storage. Click Create to create a new bucket.

- Choose a name for the bucket. Click Continue. Select a location for the staging bucket. Make a note of both the name and the location (region).

Choosing a location (region)

The location you choose for your staging bucket must match the location of your destination dataset in BigQuery. When creating your bucket, be sure to choose a region in which BigQuery is supported (see BigQuery regions).

- If the dataset does not exist yet, the dataset will be created for you in the same region where you created your bucket.

- If the dataset does exist, the dataset region must match the location you choose for your bucket.
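The location rule above can be expressed as a small check. This is a sketch of the rule as stated here, not LogRocket's own validation logic. It compares names case-insensitively, on the assumption that Cloud Storage and BigQuery may report the same location with different capitalization (for example `US-CENTRAL1` and `us-central1`).

```python
from typing import Optional


def resolve_dataset_location(bucket_location: str, dataset_location: Optional[str]) -> str:
    """Return the location the dataset will use, or raise if it conflicts with the bucket.

    Pass dataset_location=None when the dataset does not exist yet.
    """
    if dataset_location is None:
        # New datasets are created in the bucket's location.
        return bucket_location
    # Existing datasets must already be in the bucket's location.
    if bucket_location.strip().lower() != dataset_location.strip().lower():
        raise ValueError(
            f"bucket location {bucket_location!r} does not match "
            f"dataset location {dataset_location!r}"
        )
    return dataset_location


if __name__ == "__main__":
    print(resolve_dataset_location("US-CENTRAL1", "us-central1"))  # us-central1
    print(resolve_dataset_location("EU", None))  # EU
```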

- Click Continue, then set the remaining options according to your preferences. Once the options have been filled out, click Create.

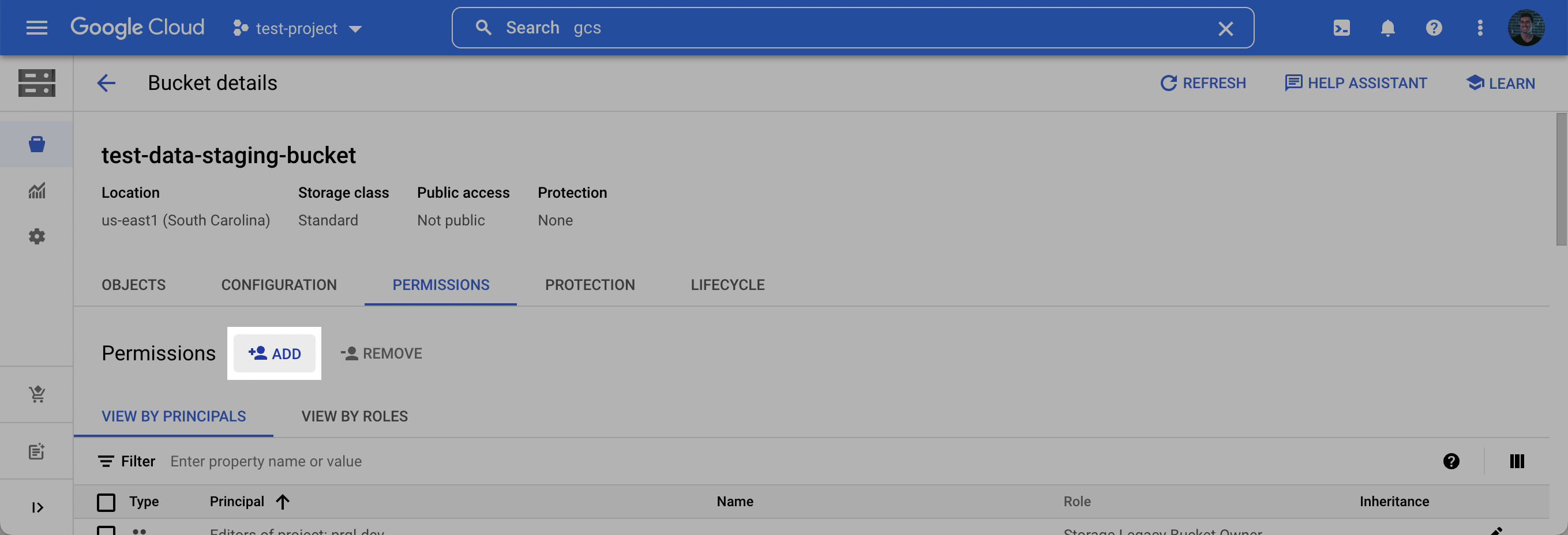

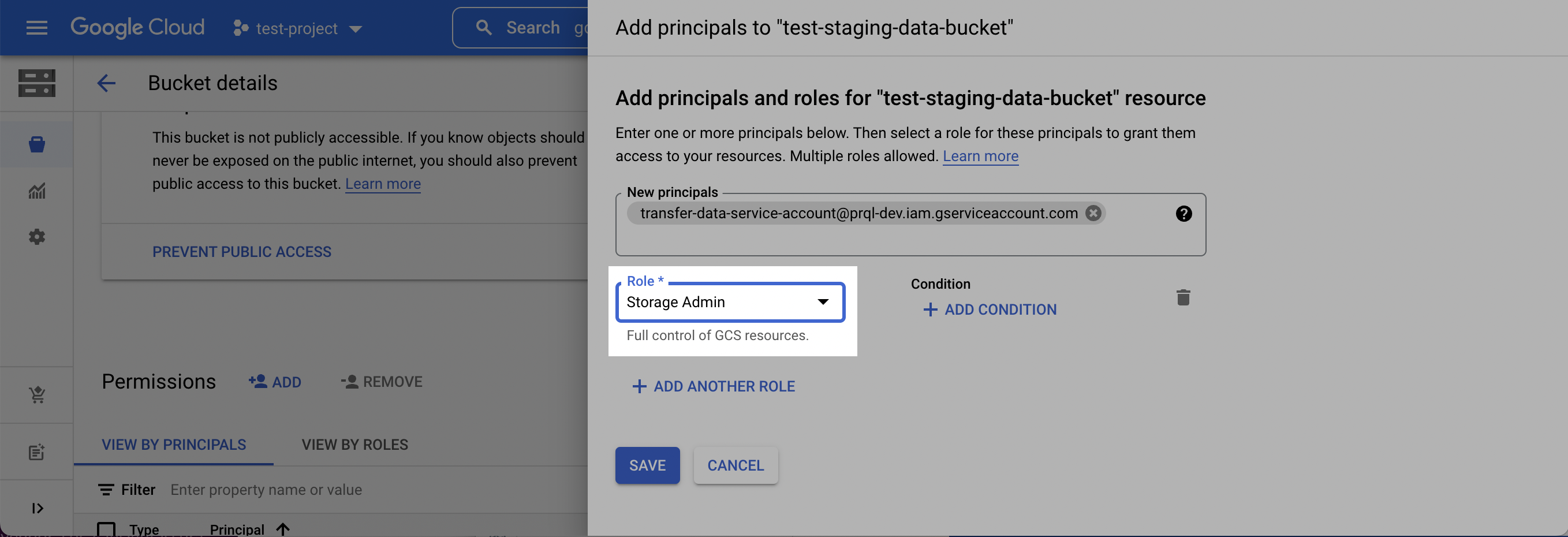

- On the Bucket details page that appears, click the Permissions tab, and then click Add.

- In the New principals dropdown, add the Service Account created in Step 1, select the Storage Admin role, and click Save.
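Step 2 can also be done with the `gcloud storage` commands assembled below. The bucket name, location, project ID, and service account email are hypothetical placeholders. The sketch only prints the commands, and it leaves the options you would otherwise choose in the Console's later screens (such as storage class and access control) at their defaults.

```python
import shlex
from typing import List


def step2_commands(bucket: str, location: str, project_id: str, sa_email: str) -> List[List[str]]:
    """Return gcloud argument lists that create the staging bucket and grant Storage Admin."""
    url = f"gs://{bucket}"
    return [
        # Create the bucket in the same location as the destination dataset.
        ["gcloud", "storage", "buckets", "create", url,
         f"--project={project_id}", f"--location={location}"],
        # Grant the Step 1 service account Storage Admin on this bucket only.
        ["gcloud", "storage", "buckets", "add-iam-policy-binding", url,
         f"--member=serviceAccount:{sa_email}", "--role=roles/storage.admin"],
    ]


if __name__ == "__main__":
    # Hypothetical placeholder values.
    for cmd in step2_commands("my-staging-bucket", "us-central1", "my-project",
                              "export-loader@my-project.iam.gserviceaccount.com"):
        print(shlex.join(cmd))
```

Binding the role on the bucket rather than the project keeps the service account's storage access limited to the staging bucket.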

Step 3: Find Project ID

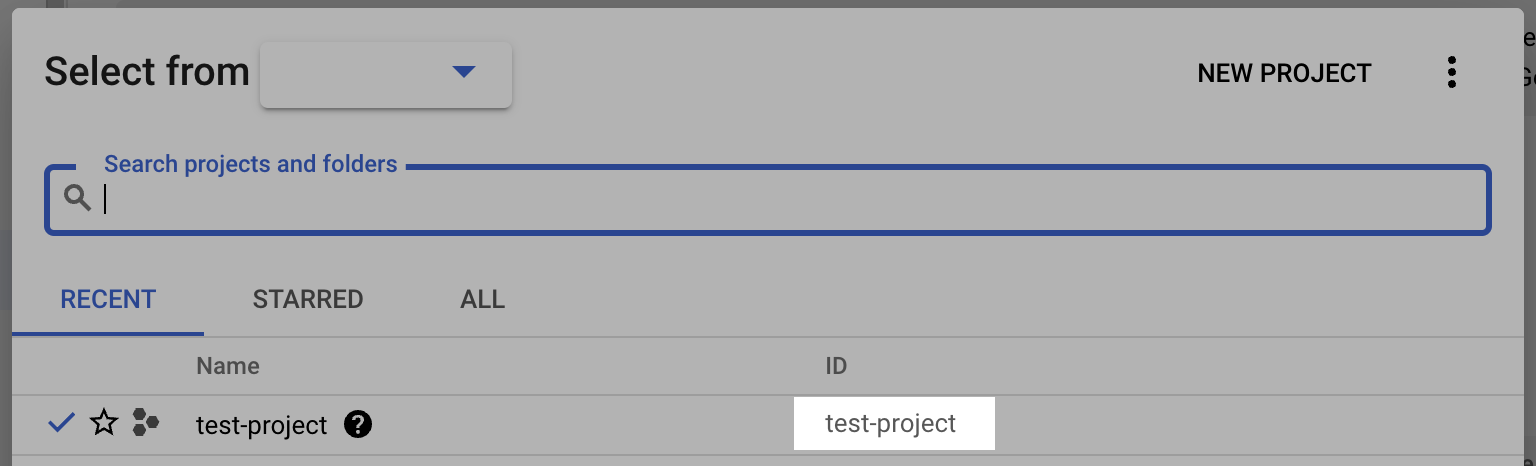

- Log into the Google Cloud Console and select the projects list dropdown.

- Make a note of the BigQuery Project ID.
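The Console also shows project names and project numbers, which are easy to confuse with the project ID. The sketch below checks a candidate against Google Cloud's format for newly created project IDs: 6 to 30 characters of lowercase letters, digits, and hyphens, starting with a letter and not ending with a hyphen. It does not accept older domain-scoped IDs such as `example.com:my-project`. If you use the gcloud CLI, `gcloud projects list` shows the ID in its `PROJECT_ID` column.

```python
import re

# Newer project IDs: a lowercase letter, 4-28 letters/digits/hyphens, then a letter or digit.
PROJECT_ID_RE = re.compile(r"[a-z][a-z0-9-]{4,28}[a-z0-9]")


def is_valid_project_id(candidate: str) -> bool:
    """Return True if candidate matches the standard project ID format."""
    return PROJECT_ID_RE.fullmatch(candidate) is not None


if __name__ == "__main__":
    # A project ID, a display-style name, and a project number.
    for value in ["my-project-123", "My Project", "123456789012"]:
        print(value, is_valid_project_id(value))
```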

Step 4: Gather the required setup information

For the data export setup, you will need:

- Project ID

- Bucket Name

- Bucket Location

- Dataset name

- Service Account Name
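If you collect these values in a script before opening the settings page, a small container like the hypothetical `ExportSetup` below can catch obvious mistakes. It is not part of any LogRocket API. It assumes the Service Account Name field expects the service account email noted in Step 1, and it checks dataset names against BigQuery's naming rule (letters, digits, and underscores, up to 1,024 characters).

```python
import re
from dataclasses import dataclass, fields
from typing import List


@dataclass(frozen=True)
class ExportSetup:
    """Hypothetical container for the five values the setup page asks for."""
    project_id: str
    bucket_name: str
    bucket_location: str
    dataset_name: str
    service_account: str  # assumed: the email noted in Step 1

    def problems(self) -> List[str]:
        """Return a list of human-readable issues; an empty list means no issues found."""
        issues = [f"{f.name} is empty" for f in fields(self) if not getattr(self, f.name).strip()]
        # BigQuery dataset names: letters, digits, and underscores, at most 1,024 characters.
        if self.dataset_name and not re.fullmatch(r"[A-Za-z0-9_]{1,1024}", self.dataset_name):
            issues.append("dataset_name may only contain letters, digits, and underscores")
        # User-managed service account emails end with this suffix.
        if self.service_account and not self.service_account.endswith(".iam.gserviceaccount.com"):
            issues.append("service_account should be the service account's email address")
        return issues


if __name__ == "__main__":
    # Hypothetical placeholder values.
    setup = ExportSetup("my-project", "my-staging-bucket", "us-central1",
                        "logrocket_export", "export-loader@my-project.iam.gserviceaccount.com")
    print(setup.problems() or "looks complete")
```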

Visit the LogRocket Streaming Data Export settings page to complete the setup.
